If you’ve worked in AWS long enough, you’ve probably had the same week more than once: an outage with no single root cause, an on-call rotation that slowly turns into a trauma bond, and a backlog full of “we should really…” tickets that never get touched because shipping wins.

That’s the cloud consultancy world today. Not because people are incompetent. Because modern cloud systems are a machine built out of a thousand Lego pieces… and half of them can move while you’re looking away.

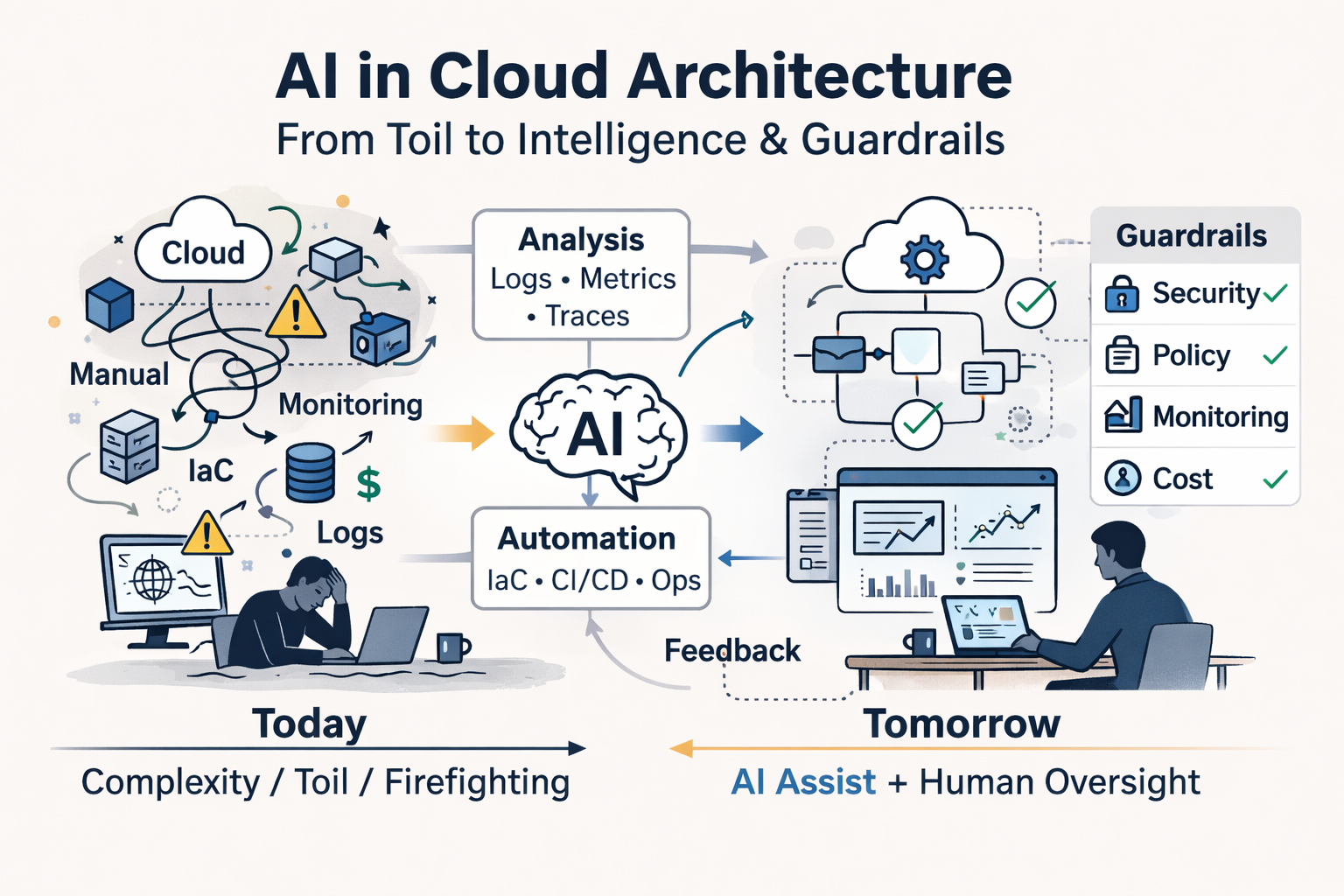

AI is landing in the middle of that mess. And it’s forcing an uncomfortable question: are we about to automate the work of cloud architects and DevOps/SRE teams, or just accelerate them into building even more complexity?

The real job: turning moving parts into a system

A lot of people still describe cloud roles as “build infra” or “set up CI/CD.” That’s the brochure. The job in practice is closer to:

- Understand what actually exists (not what the diagram says).

- Predict how it fails under load, human error, and weird edge cases.

- Put guardrails in so failures are contained, observable, and recoverable.

- Make the whole thing operable by people who didn’t build it.

The reason this is hard isn’t that AWS is complicated in isolation. It’s that everything is coupled through behaviors:

- IAM policies interact with service features, which interact with your CI permissions, which interact with human workflows.

- Networking is “simple” until you add multi-account, PrivateLink, hybrid DNS, egress controls, and a vendor that assumes public internet exists.

- Terraform is deterministic until state drift, provider quirks, and “someone clicked it in the console” show up at 2am.

- A pipeline is fine until deploy order, migrations, retries, and partial rollouts create a failure mode you never simulated.

This is why “cloud complexity” isn’t just “a lot of services.” It’s a lot of moving parts with invisible contracts.

And invisible contracts are where humans burn time.

Where AI helps immediately: compressing the boring parts of expertise

Most of a senior cloud engineer’s leverage comes from pattern recognition plus context: “I’ve seen this before, and here’s the safe path.” The problem is that getting to the safe path often requires hours of grunt work:

- Reading logs across CloudWatch, ALB access logs, application logs, traces, metrics.

- Comparing current Terraform state to what’s deployed.

- Hunting down which IAM role is assumed where.

- Mapping dependencies: which queues feed which Lambda functions, which services talk to which, what can be scaled independently.

- Translating “we had latency” into “we had retries that amplified load which caused connection pool exhaustion which caused a thundering herd.”
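That last bullet is worth a back-of-the-envelope calculation, because retry amplification is easy to underestimate. Here is a minimal sketch, assuming every attempt fails independently with the same probability and every layer retries a fixed number of times. Both are simplifications, and the function names are mine, not from any library.

```python
def expected_attempts(failure_rate: float, max_retries: int) -> float:
    """Expected attempts per request for one caller.

    Assumes each attempt fails independently with probability `failure_rate`
    and the caller gives up after `max_retries` retries.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    # Attempt k (0-based) only happens if the previous k attempts all failed.
    return sum(failure_rate ** k for k in range(max_retries + 1))


def worst_case_amplification(retries_per_layer: list[int]) -> int:
    """Worst-case attempts at the bottom of a call chain for one top-level
    request, when every layer exhausts its retries."""
    total = 1
    for retries in retries_per_layer:
        total *= retries + 1
    return total
```

With three layers that each retry three times, `worst_case_amplification([3, 3, 3])` is 64: one user request can turn into 64 attempts against the dependency at the bottom. That is the kind of number that turns “we had latency” into an actual explanation.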

AI is extremely good at summarizing, correlating, and proposing hypotheses—if you give it the right inputs and constrain what it’s allowed to do.

Think of it as a new layer:

- Not “generate Terraform from scratch.”

- More like “take these signals and tell me what’s weird, what changed, and what to check next.”

That’s a big deal. The time sink in cloud work isn’t writing code. It’s understanding reality.

AI can compress “cloud archaeology” from days to hours.

But “agents + context” isn’t magic, because cloud work is not a text problem

The current AI pitch is often: “Give an agent tools + context, and it can do the job.” That’s partly true. But cloud architecture and DevOps have a few properties that break the simple narrative:

1) Context isn’t a single blob — it’s fragmented, conflicting, and time-sensitive

Your “context” lives in places that disagree with each other:

- Terraform repo says one thing.

- AWS account has drift.

- Runtime behavior contradicts both.

- Security policy docs are out of date.

- Tribal knowledge sits in Slack messages and someone’s head.

Agents can ingest context, sure. The question is: can they decide which context is trustworthy and when they need to re-verify in AWS?

That’s not a prompt problem. That’s an epistemology problem.
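Part of that judgment can at least be made explicit. A rough sketch, assuming a hypothetical trust order (live API observation over declared config over docs) and a freshness limit you pick yourself. The source labels, the `Claim` type, and `reconcile` are illustrative names, not an existing tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Claim:
    source: str            # illustrative labels: "aws_api", "terraform", "wiki"
    value: str             # what this source says about one setting
    observed_at: datetime  # when the claim was last known to be true


# Hypothetical trust order: higher wins. Unknown sources rank lowest.
TRUST_ORDER = {"aws_api": 3, "terraform": 2, "wiki": 1}


def reconcile(claims: list[Claim], now: datetime, max_age: timedelta) -> dict:
    """Pick the most trusted (then most recent) claim, list the sources that
    disagree with it, and flag it for re-verification if it is too old."""
    if not claims:
        raise ValueError("need at least one claim")
    best = max(claims, key=lambda c: (TRUST_ORDER.get(c.source, 0), c.observed_at))
    conflicts = sorted({c.source for c in claims if c.value != best.value})
    return {
        "value": best.value,
        "source": best.source,
        "conflicts_with": conflicts,
        "needs_reverify": now - best.observed_at > max_age,
    }
```

The useful output here is less the “winner” than the `conflicts_with` list: a disagreement between the repo and the account is a drift signal a human should look at, not something to silently resolve.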

2) The blast radius is real

In cloud, a wrong change isn’t “oops, wrong answer.” It’s:

- Deleted resources.

- Broken networking.

- Escalated privileges.

- Data loss.

- A week of incident reviews and permanent scars.

So the bar isn’t “can the agent do it.” It’s “can the agent do it with guardrails that prevent expensive failure.”
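One cheap guardrail of that kind is refusing to auto-apply anything that deletes or replaces a resource. The sketch below assumes the input is the JSON that `terraform show -json <planfile>` prints, with a top-level `resource_changes` list whose entries carry an `address` and a `change.actions` list. The function name is hypothetical, and a real gate would need more than this.

```python
import json


def destructive_changes(plan_json: str) -> list[str]:
    """Return the addresses of resources a plan would delete or replace."""
    plan = json.loads(plan_json)
    flagged = []
    for rc in plan.get("resource_changes", []):
        actions = rc.get("change", {}).get("actions", [])
        # A replacement appears as both "delete" and "create" in the same
        # actions list, so checking for "delete" covers both cases.
        if "delete" in actions:
            flagged.append(rc["address"])
    return flagged
```

If the returned list is non-empty, the change goes to a human. An agent can still propose it; it just can’t be the one to push the button.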

3) The hard part is choosing trade-offs under constraints

Architecting isn’t selecting a service. It’s choosing between competing constraints:

- Speed vs reliability

- Cost vs simplicity

- Isolation vs operability

- Standardization vs flexibility

AI can suggest options, but it can’t own the trade-off unless you’ve encoded your business priorities and risk tolerance in a way that’s explicit and enforceable.

Most orgs haven’t.

4) Systems change because humans change them

A lot of cloud failures aren’t technical puzzles. They’re workflow puzzles:

- “The pipeline allowed it.”

- “The review process didn’t catch it.”

- “On-call didn’t have the runbook.”

- “The monitoring existed but nobody trusted it.”

AI can help with the technical layer, but the human layer still defines the system.

So no, “agents + context” won’t replace a cloud architect in the general case. It will replace a chunk of the work inside the role. The messy chunk.

What shifts in the profession: from builders to editors and safety engineers

Here’s the biggest change I see coming: cloud folks will spend less time writing and more time reviewing, constraining, and supervising.

Instead of “I’ll implement this,” the workflow becomes:

- Describe intent and constraints (security, cost, latency, compliance, SLOs).

- Agent proposes implementation (Terraform, pipeline, policies).

- Human reviews at the boundary: IAM, network, data, blast radius.

- Automated checks enforce constraints (policy-as-code, tests, drift detection).

- Agent monitors outcomes and flags anomalies post-deploy.
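The “human reviews at the boundary” step can be routed mechanically. A minimal sketch, assuming the agent’s proposal is a list of Terraform resource types; the prefix-to-boundary mapping is a made-up starting point that you would tune to your own estate.

```python
# Hypothetical mapping from Terraform resource-type prefixes to the review
# boundaries named above. Incomplete on purpose; extend it for your stack.
BOUNDARY_PREFIXES = {
    "iam": ("aws_iam_",),
    "network": ("aws_vpc", "aws_security_group", "aws_route"),
    "data": ("aws_s3_bucket", "aws_db_", "aws_dynamodb_"),
}


def boundaries_touched(resource_types: list[str]) -> set[str]:
    """Return which sensitive boundaries a proposed change touches."""
    touched = set()
    for rtype in resource_types:
        for boundary, prefixes in BOUNDARY_PREFIXES.items():
            # str.startswith accepts a tuple of candidate prefixes.
            if rtype.startswith(prefixes):
                touched.add(boundary)
    return touched


def needs_human_review(resource_types: list[str]) -> bool:
    """A change needs a human if it touches any sensitive boundary."""
    return bool(boundaries_touched(resource_types))
```

A change that only touches, say, a log group sails through the automated checks. Anything touching IAM, network, or data lands in front of a person.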

The cloud architect becomes closer to:

- A spec writer for intent

- A critic for edge cases

- A designer of guardrails

- A risk manager

That’s not less work. It’s different work.

And frankly, it’s the part of the job senior people already do—just with less of their time wasted on glue tasks.

Will teams shrink from 6–10 to 3–4?

Sometimes. Not always. And the reason matters.

AI will increase throughput. But throughput doesn’t translate 1:1 to smaller teams, because organizations don’t freeze scope when productivity rises. They expand it.

What I think is more realistic:

- Early-stage startups: yes, you’ll see smaller platform teams for longer. A strong senior engineer plus AI-assisted workflows can cover what used to require a few more hands. Especially if the architecture stays intentionally simple.

- SMBs with messy legacy AWS: you might not shrink headcount, but you’ll reduce time spent on diagnosis, repetitive refactors, and incident recovery. The “work” stays, but it’s less soul-crushing.

- Regulated or high-scale orgs: teams may not shrink much, because the limiting factor becomes governance, auditability, and change management—not raw implementation speed.

Also: if AI makes it easier to ship infrastructure changes, you’ll ship more changes. That raises the need for controls; it doesn’t reduce the need for people.

The “3–4 people replacing 10” story is true only if you also commit to:

- strict standardization

- fewer bespoke services

- explicit constraints

- boring architecture

- aggressive automation of validation

If you keep building snowflakes, AI won’t save you. It will help you build snowflakes faster.

The winning pattern: AI as an assistant to the control plane, not a wildcard engineer

If I were advising a cloud team (or a consultancy) on how to use AI without creating a new kind of operational debt, I’d push a few principles:

Treat AI like a junior engineer with infinite energy and zero shame

Useful, fast, and sometimes dangerously confident.

So:

- Never give it production write access without approvals.

- Require it to cite sources from your system (logs, code, configs) when making claims.

- Prefer “propose and explain” over “do.”
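Those three rules fit in a very small gate. This is a sketch under my own assumptions: the `Proposal` shape and the `may_apply` name are hypothetical, and “evidence” is just a list of references (log lines, file paths, config keys) that a reviewer can check.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Proposal:
    summary: str
    target_env: str                                     # e.g. "staging" or "production"
    evidence: list[str] = field(default_factory=list)   # references a reviewer can check
    approved_by: Optional[str] = None                   # human approver, if any


def may_apply(p: Proposal) -> tuple[bool, str]:
    """Decide whether a proposal may be applied, and say why not if it may not."""
    if not p.evidence:
        return False, "no evidence cited"
    if p.target_env == "production" and not p.approved_by:
        return False, "production change needs a human approver"
    return True, "ok"
```

The point of returning a reason alongside the decision is that a rejected proposal should teach the agent (and the humans) what was missing.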

Put “policy” in code, not in someone’s memory

The future isn’t an AI that knows your rules. It’s an AI that can’t violate them.

Examples of constraints that should be machine-enforced:

- IAM boundaries (no wildcard admin, no privilege escalation paths)

- Network egress rules

- Encryption requirements

- Tagging and cost allocation

- Deployment safety rules (progressive delivery, rollbacks, approvals)
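To make the first and fourth bullets concrete, here is a deliberately narrow sketch. It assumes an IAM policy document already parsed into a dict, and it only catches the bluntest case: an `Allow` statement with `Action` `*` on `Resource` `*`. It does not detect service-level wildcards, `NotAction`, or escalation paths, and the function names and tag keys are illustrative.

```python
def _as_list(value):
    """IAM accepts a single string or a list for Action/Resource; normalize."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def wildcard_admin_statements(policy: dict) -> list[int]:
    """Return indexes of Allow statements granting Action "*" on Resource "*"."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may not be wrapped in a list
        statements = [statements]
    hits = []
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        if "*" in _as_list(stmt.get("Action")) and "*" in _as_list(stmt.get("Resource")):
            hits.append(i)
    return hits


def missing_tags(tags: dict, required: set) -> set:
    """Return the required tag keys that a resource does not carry."""
    return required - set(tags)
```

In practice you would run checks like these in CI against every proposed change, whether a person or an agent wrote it. A dedicated policy engine will do this better; the sketch only shows how little it takes to move a rule out of someone’s memory.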

Focus on observability and change intelligence

Agents shine when they can correlate:

- “what changed?”

- “what broke?”

- “what’s the blast radius?”

- “what’s the safest rollback path?”

That’s where you get real ROI without betting the company on automation.
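The “what changed?” question is the easiest one to start with. A minimal sketch, assuming you can export changes as `(change_id, timestamp)` pairs from wherever they live (deploy logs, audit trails, merged PRs); the function name and the window size are my own choices.

```python
from datetime import datetime, timedelta


def suspect_changes(
    changes: list[tuple[str, datetime]],
    incident_start: datetime,
    window: timedelta,
) -> list[str]:
    """Return ids of changes that landed within `window` before the incident
    started, most recent first."""
    earliest = incident_start - window
    in_window = [
        (ts, change_id)
        for change_id, ts in changes
        if earliest <= ts <= incident_start
    ]
    # Sort by timestamp descending so the newest change is checked first.
    return [change_id for ts, change_id in sorted(in_window, reverse=True)]
```

Recency is a crude heuristic, not a diagnosis, but it gives an agent (or a tired on-call engineer) a ranked list to work through instead of a blank page.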

Keep architecture boring on purpose

The simplest stack wins harder in an AI-assisted world, because it reduces the search space for failure and makes “context” more reliable.

In other words: AI rewards discipline.

The uncomfortable truth: AI makes the best engineers more valuable

There’s a fear that AI flattens skill. I think it does the opposite in cloud.

Because when output is cheap, judgment becomes the scarce resource.

AI will:

- help mid-level engineers move faster,

- automate parts of senior work,

- and simultaneously increase the premium on people who can design safe systems, define constraints, and understand failure modes.

The cloud architect role doesn’t disappear. It becomes less about being the person who knows every AWS service, and more about being the person who owns the system as a whole and knows what could go wrong.

Closing

AI won’t end cloud consultancy. It will split it.

One side becomes “infrastructure generation”: fast, cheap, and increasingly commoditized.

The other side becomes “operational correctness”: designing systems that survive reality—bad deploys, partial outages, IAM mistakes, scaling limits, cost explosions, and humans being humans. That work doesn’t go away. It becomes the main thing.

If you’re a cloud architect or DevOps/SRE today, the future isn’t fighting AI. It’s learning to use it as leverage, while building the guardrails that keep speed from turning into fragility.

If you’re trying to do this without overbuilding, we can help.

Question: what part of your cloud work feels most “automatable” by AI, and what part do you think will stay stubbornly human?